The Cast of Characters

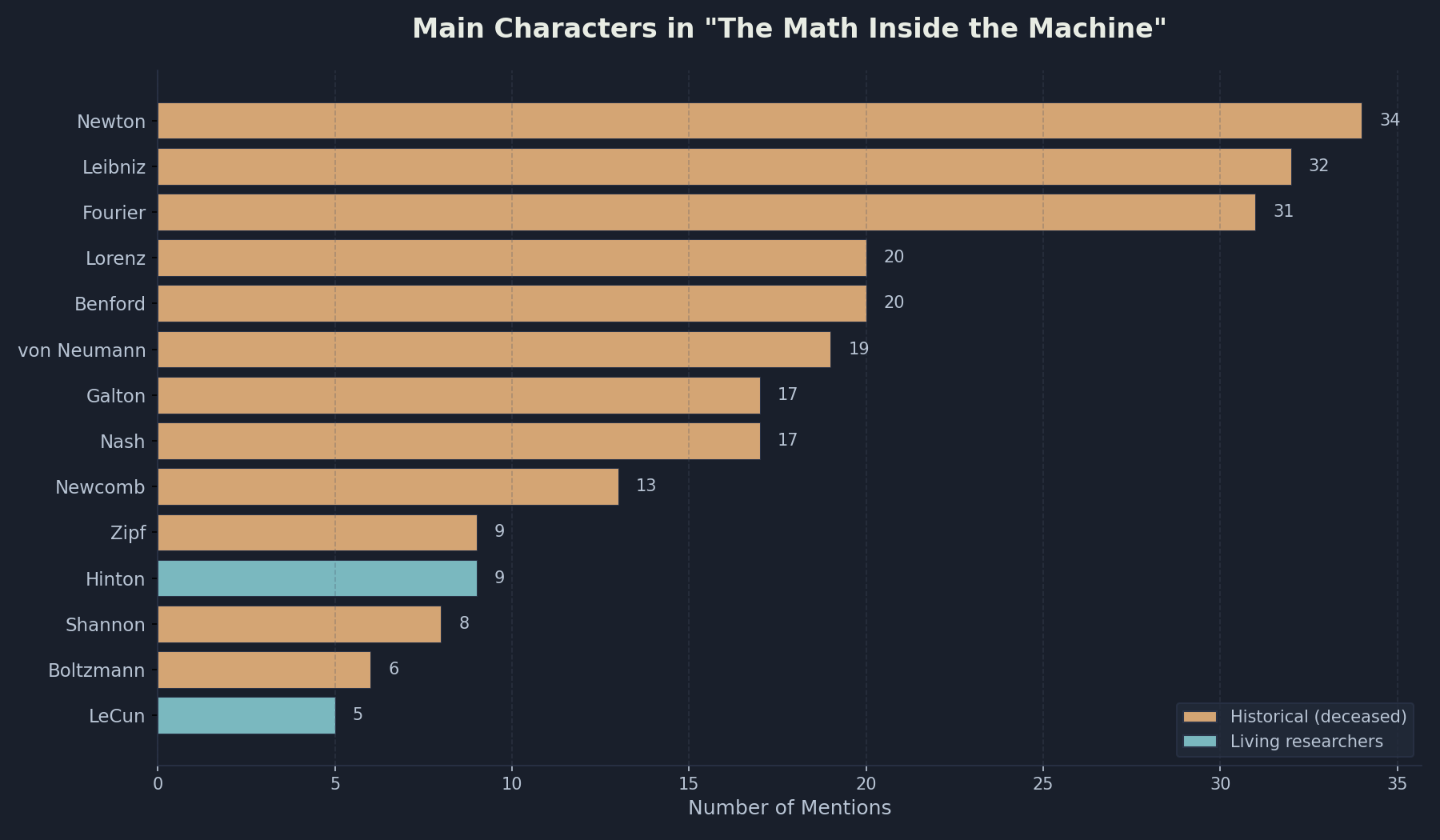

A data-driven look at who shows up most often in the book—from 17th-century rivals to Nobel laureates still making headlines.

Every book about ideas is also a book about people. The Math Inside the Machine is no exception. While the subtitle promises eleven simple operations, the pages are populated by the mathematicians, physicists, and computer scientists who invented them.

I got curious: who shows up most often?

So I did what any author with leftover Claude Code tokens and a shower thought would do. I asked Claude to write a spaCy script to extract named entities from the manuscript and count them. The results surprised me—and revealed something about how the history of AI is really the history of mathematics catching up with itself.
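For the curious, here is a minimal sketch of what such a script might look like. It is not the script Claude actually wrote. The function names, the `en_core_web_sm` model, and the choice to merge names by surname are all assumptions made for illustration.

```python
from collections import Counter


def count_people(entities):
    """Count PERSON entities from (text, label) pairs, keyed by surname."""
    counts = Counter()
    for text, label in entities:
        if label != "PERSON":
            continue
        tokens = text.strip().split()
        if tokens:
            # Assumption: fold "Isaac Newton" and "Newton" together
            # by keeping only the last word of the name.
            counts[tokens[-1]] += 1
    return counts


def extract_entities(path, model="en_core_web_sm"):
    """Run spaCy NER over a text file and return (text, label) pairs.

    Requires spaCy and the named model to be installed separately.
    """
    import spacy  # imported lazily so count_people works without spaCy

    nlp = spacy.load(model)
    with open(path, encoding="utf-8") as f:
        doc = nlp(f.read())
    return [(ent.text, ent.label_) for ent in doc.ents]


# Hypothetical usage, assuming the manuscript lives in manuscript.txt:
# for name, n in count_people(extract_entities("manuscript.txt")).most_common(20):
#     print(f"{name}: {n}")
```

Keeping only the last word is a crude way to merge full names with bare surnames, and it turns "von Neumann" into "Neumann", so a real run would need some hand cleanup. A full manuscript may also exceed spaCy's default `nlp.max_length` of 1,000,000 characters, in which case it would have to be processed chapter by chapter.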

The Top Three: A 17th-Century Rivalry

Newton and Leibniz dominate the chart. Between them, they account for 66 mentions—nearly twice as many as anyone else. This makes sense: the chapter on derivatives tells the story of calculus, and that story is inseparable from the bitter priority dispute between these two men.

Newton invented calculus first, probably by a decade. But he kept it secret. Leibniz invented it independently and published it openly. Newton accused him of plagiarism. The Royal Society—with Newton as president—sided with Newton. Leibniz died in disgrace, buried in an unmarked grave.

Here’s the twist: Leibniz won the long game. His notation—dy/dx—conquered mathematics. Every time a neural network computes a gradient, every time backpropagation nudges weights toward lower loss, the symbols on the page are Leibniz’s. Newton’s dot notation survives only in corners of physics.

The third-most-mentioned person is Joseph Fourier, who dominates the chapter on trigonometry. Fourier was a French mathematician who accompanied Napoleon to Egypt, caught a disease that left him perpetually cold, and invented a technique for decomposing complex signals into simple waves. That technique, Fourier analysis, now underpins everything from audio compression to the sinusoidal positional encodings of the original transformer architecture.
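Decomposing a signal into simple waves is easier to see than to describe. The sketch below builds a signal from two sine waves and then recovers their frequencies with a naive discrete Fourier transform. It is a plain O(n²) loop written for readability, not the fast algorithm (FFT) that real systems use, and the signal and function name are made up for illustration.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Return normalized magnitudes for frequencies 0..n/2 of a real signal."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        # Correlate the signal with a complex wave of frequency k.
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(signal)
        )
        mags.append(abs(coeff) / n)
    return mags


# A made-up signal: a strong wave at frequency 3 plus a weaker one at 10.
n = 64
signal = [
    math.sin(2 * math.pi * 3 * t / n) + 0.5 * math.sin(2 * math.pi * 10 * t / n)
    for t in range(n)
]

mags = dft_magnitudes(signal)
strongest = sorted(range(len(mags)), key=lambda k: mags[k], reverse=True)[:2]
print(sorted(strongest))  # prints [3, 10]
```

The two largest magnitudes land exactly on the two frequencies that went into the signal, and every other frequency comes out at essentially zero.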

The Strange Pattern of Discovery

Something interesting emerges when you look at the distribution by chapter:

- Derivatives: Newton and Leibniz, the inventors of calculus, plus Geoffrey Hinton and Yann LeCun, two pioneers of modern deep learning

- Logarithms: Frank Benford and Simon Newcomb, who independently noticed that real-world numbers follow a peculiar pattern

- Comparison: John Nash and John von Neumann, who turned strategic reasoning into mathematics

- Iteration: Edward Lorenz, who discovered chaos while trying to predict the weather

The chapters cluster people by what they discovered, not when they lived. Newton (1643–1727) and Hinton (born 1947) appear in the same chapter because they worked on the same problem: how to compute the rate of change. Three centuries apart, they’re mathematical neighbors.

This is part of what makes AI remarkable. The operations inside modern language models were invented by people who had no idea their work would one day teach machines to write poetry. Leibniz didn’t know about GPUs. Fourier didn’t know about tokenization. But their mathematics works anyway.

Living Characters

Two names on the chart are highlighted differently because they belong to people who are still alive: Geoffrey Hinton and Yann LeCun. The two shared the 2018 Turing Award with Yoshua Bengio for their work on deep learning. Hinton also shared the 2024 Nobel Prize in Physics with John Hopfield. Both have been called “godfathers of AI,” though Hinton has recently become famous for warning about the technology he helped create.

There’s something strange about writing history that’s still happening. I imagine Hinton reading the chapter where he appears alongside Newton and feeling the weight of that juxtaposition—or perhaps rolling his eyes at it.

What the Numbers Don’t Show

The chart counts mentions, not importance. Some figures appear briefly but cast long shadows. Claude Shannon—the father of information theory—appears only eight times, but his 1948 paper might be the most consequential single document in the history of computing. Ludwig Boltzmann appears six times, but his equation connects entropy to probability and explains why softmax works at all.

And then there’s George Kingsley Zipf, who discovered that word frequencies follow a power law. He appears in the first chapter, and his law explains why tokenizers work the way they do. Nine mentions. Outsized influence.

The Story Beneath the Math

The mathematics has a history, and the history is full of rivalries, tragedies, and improbable connections.

Newton kept calculus secret out of paranoia. Boltzmann killed himself after years of attacks on his theory. Lorenz discovered chaos by accident when he re-entered a number that his printout had rounded to three decimal places. These aren’t footnotes. They’re the texture of scientific progress.

The book follows eleven operations from their origins to their roles in modern AI. Along the way, you meet the people who invented them. Some were geniuses. Some were lucky. All of them contributed something essential to the systems that now draft emails, generate images, and occasionally say things that sound like understanding.

If you want to know what’s really happening when you talk to an AI, you could read the technical papers. Or you could start with the people. Either path leads to the same place: the unreasonable simplicity of intelligence, built from operations you learned in school.

The cast is larger than the chart shows. But these are the main characters—the names that echo through the mathematics of AI.